As we explained last week, the method we apply to Westminster polling can also be used to estimate the underlying balance of opinion in Scotland. This involves pooling all the currently available polling data while taking into account the estimated biases of individual pollsters ('house effects'). Our approach takes a conservative view of sudden movements in the polls, in part because we produce weekly rather than daily estimates of public opinion. This could be seen as a weakness, since our estimates react more slowly to new information. It does, however, limit the influence of random noise and short-term bounces in the polls. The cost, of course, is that we may miss genuine last-minute movements in opinion.
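To make the trade-off concrete, here is a minimal sketch of pooling polls with a house-effect correction, written as a simple Kalman filter over a weekly random walk. It is an illustration under stated assumptions, not the Polling Observatory's actual model: the pollster labels, house-effect values, drift variance and example polls are all hypothetical. The `drift_var` parameter plays the role of our "conservative view": the smaller it is, the less any single week's polls can move the estimate.

```python
from dataclasses import dataclass


@dataclass
class Poll:
    week: int          # week index of fieldwork
    pollster: str      # hypothetical pollster label
    yes_share: float   # Yes as a percentage of decided respondents
    sample_size: int


# Hypothetical house effects in percentage points: positive means the
# pollster tends to overstate Yes relative to the all-pollster average.
HOUSE_EFFECTS = {"A": 1.5, "B": -1.0, "C": 0.0}


def sampling_variance(share_pct: float, n: int) -> float:
    """Binomial sampling variance of a poll share, in squared points."""
    p = share_pct / 100
    return 10_000 * p * (1 - p) / n


def pooled_estimates(polls, n_weeks, drift_var=0.25, start=50.0, start_var=100.0):
    """Weekly Yes estimates from a local-level (random-walk) Kalman filter.

    Returns a list of (week, estimated Yes share, standard error).
    A small drift_var encodes a conservative view of sudden movements.
    """
    mean, var = start, start_var
    out = []
    for week in range(n_weeks):
        var += drift_var  # predict: opinion may drift a little each week
        for poll in (p for p in polls if p.week == week):
            # Correct each poll for its pollster's (assumed) house effect.
            adjusted = poll.yes_share - HOUSE_EFFECTS.get(poll.pollster, 0.0)
            gain = var / (var + sampling_variance(poll.yes_share, poll.sample_size))
            mean += gain * (adjusted - mean)  # move toward the adjusted poll
            var *= 1 - gain                   # uncertainty shrinks after each poll
        out.append((week, mean, var ** 0.5))
    return out


if __name__ == "__main__":
    polls = [
        Poll(0, "A", 47.0, 1000), Poll(0, "B", 44.0, 1200),
        Poll(1, "C", 46.0, 1000), Poll(1, "A", 49.0, 1000),
        Poll(2, "B", 45.5, 1100), Poll(2, "C", 46.5, 900),
    ]
    for week, yes, se in pooled_estimates(polls, n_weeks=3):
        print(f"week {week}: Yes {yes:.1f}% (s.e. {se:.2f})")
```

Raising `drift_var` makes the estimate chase new polls more quickly, at the price of passing more noise through; lowering it does the opposite, which is exactly the weakness and the strength described above.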

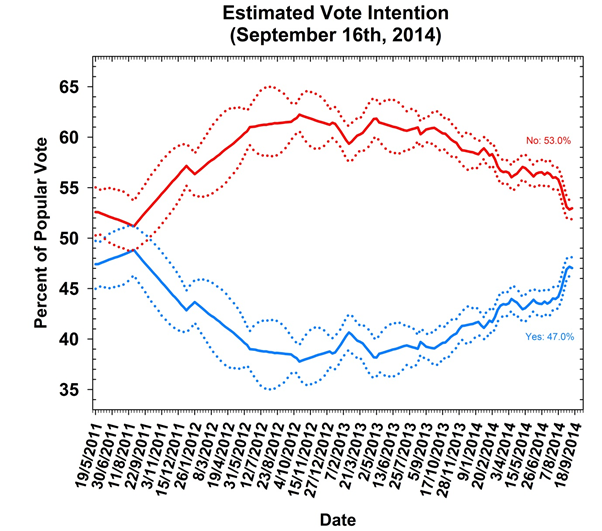

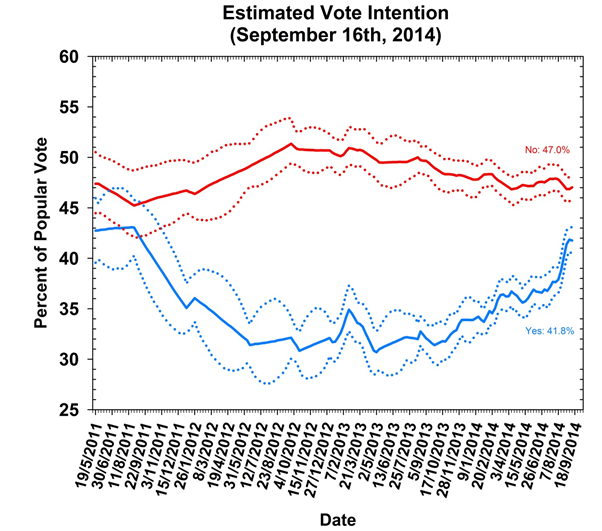

Our data cover the period up to Tuesday September 16. The last ten days of the campaign have seen public opinion stabilise – following a surge in support for the Yes camp over the previous month or so. This week’s estimates put No on 53.0% (up 0.6 points since our last report) and Yes on 47.0% (down 0.6 points). These results are consistent with the levelling-off in support we have seen in the polls.

At this late stage of the campaign, some are quick to talk about 'herding' by pollsters, but there is no evidence that pollsters are tweaking their methodologies to avoid being at odds with their competitors. Nor is there any evidence that they are withholding results that diverge from the polling consensus (the so-called 'file-drawer effect' familiar from scientific publishing). The more likely explanation is a mundane one: public opinion has settled, differences in non-response rates between supporters of the two sides have narrowed, and the polls are converging on the result. The race is still close, but No is the favourite with a clear lead.

We see the same stabilisation in voting intentions in the trend for unadjusted responses (undecided voters are not plotted on our graph). As we showed last week, No support has held steady in the polls for some time, and movements in the headline figures appear to have been driven largely by Yes winning over 'don't knows'. With support for both Yes and No static over the last week or so, the pool of potential switchers may have shrunk substantially, leaving the campaigns fighting over scraps. Our estimates suggest 11.2% are still undecided, but because Yes has already won over many former 'don't knows', the remaining undecided voters are presumably more evenly split between Yes and No. As it stands, the Yes campaign still has some way to go if it is to snatch victory at the finish line.

Whatever the outcome, it is clear that opinion in Scotland is divided. It remains possible that the polls are wrong, with very high turnout and differential response rates (the 'shy Noes') being potential confounding factors for pollsters. Indeed, past evidence suggests that an underestimation of No support is somewhat more likely. What we can say with confidence is that, once random noise is accounted for, the polls give a clear signal of who is ahead. Our approach is, of course, not without uncertainty.

The measurement of 'house effects' is based on differences between pollsters across the entire campaign, but these may have changed in the last few weeks as the electorate has become increasingly engaged. Most crucially, the confidence intervals around our estimates assume that the polls are not, on average, systematically biased in favour of either Yes or No, which we cannot be sure is true. Although every final poll points towards a No vote, the true balance of public opinion on Scottish independence could be different. A Yes vote might prompt the sort of inquiry among pollsters that followed the 1992 general election disaster (when the polls put Labour ahead right up to Election Day), though the breakup of the United Kingdom might be a little more pressing than a debate over survey methodologies.

This article first appeared on Southampton University's Polling Observatory blog.

This is a Scottish independence special of our regular series of posts reporting on the state of support for the parties at Westminster as measured by opinion polls. By pooling all the available polling evidence, we can reduce the impact of the random variation that each individual survey inevitably produces. Most of the short-term advances and setbacks in the polls are nothing more than random noise; the underlying trends, which are what interest us and which best capture the state of public opinion, are relatively stable and little influenced by day-to-day events. Further details of the method we use to build our estimates of public opinion can be found here.
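As a back-of-the-envelope illustration of why pooling helps, the sketch below compares the sampling error of one poll with that of several polls pooled together. It assumes, purely for illustration, independent simple random samples of 1,000 respondents each, a true Yes share of 47%, and no house effects. Real polls are not simple random samples, so this is an idealised figure rather than the actual error of any published poll.

```python
import math


def sampling_se(share_pct: float, n: int) -> float:
    """Standard error of a poll share in percentage points (simple random sample)."""
    p = share_pct / 100
    return 100 * math.sqrt(p * (1 - p) / n)


def pooled_se(share_pct: float, n_per_poll: int, n_polls: int) -> float:
    """Standard error after pooling n_polls independent polls of equal size."""
    return sampling_se(share_pct, n_per_poll * n_polls)


single = sampling_se(47.0, 1000)
pooled = pooled_se(47.0, 1000, 10)
print(f"one poll:  +/- {1.96 * single:.1f} points (95%)")
print(f"ten polls: +/- {1.96 * pooled:.1f} points (95%)")
```

Under these assumptions, pooling ten polls cuts the 95% margin of error from about 3.1 points to about 1.0 point, a reduction by a factor of the square root of ten. This is why a shift of two or three points in a single poll is usually noise, while the same shift in the pooled trend is more likely to be real.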